FontRNN: Generating Large-scale Chinese Fonts via Recurrent Neural Network

1 These authors contributed equally to this work. 2 Corresponding author.

Accepted by PG, Oct 2019.

Abstract

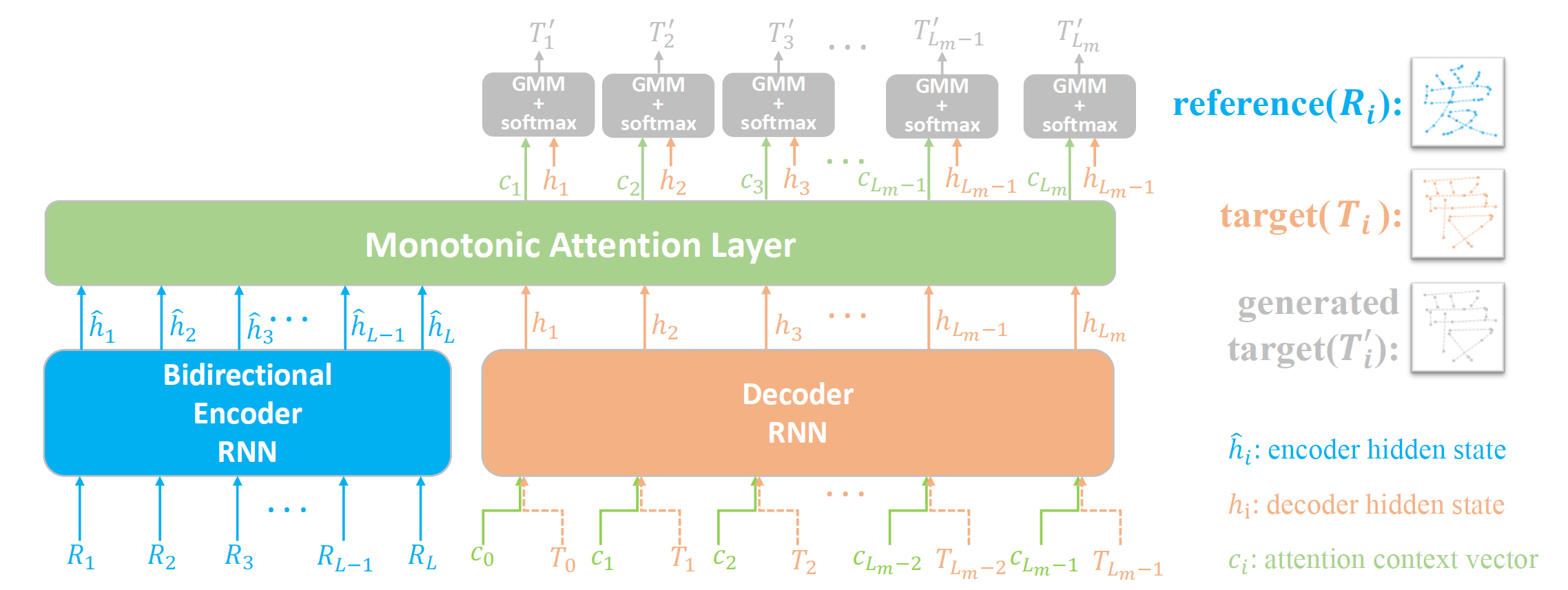

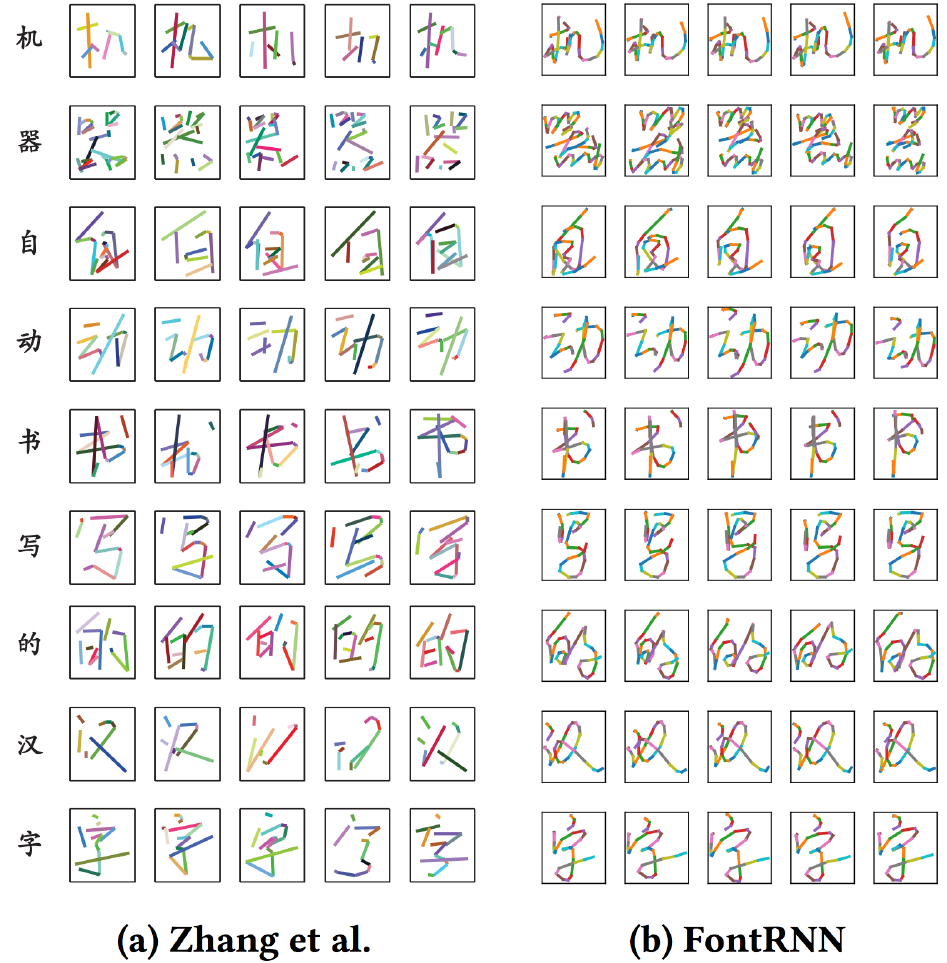

Despite the recent impressive development of deep neural networks, using deep learning based methods to generate large-scale Chinese fonts is still a rather challenging task due to the huge number of intricate Chinese glyphs; for example, the official standard Chinese charset GB18030-2000 consists of 27,533 Chinese characters. Until now, most existing models for this task have adopted Convolutional Neural Networks (CNNs) to generate bitmap images of Chinese characters, owing to the remarkable success of CNN based models in various applications. However, CNN based models focus more on image-level features and usually ignore the stroke order information used when writing characters. Instead, we treat Chinese characters as sequences of points (i.e., writing trajectories) and propose to handle this task via an effective Recurrent Neural Network (RNN) model with a monotonic attention mechanism, which can learn from as few as hundreds of training samples and then synthesize glyphs for the remaining thousands of characters in the same style. Experimental results show that our proposed FontRNN can be used for synthesizing large-scale Chinese fonts as well as for generating realistic Chinese handwriting efficiently.
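To make the "sequence of points" view concrete, the following is a minimal sketch of how a glyph's writing trajectory might be flattened into one ordered sequence that an RNN could consume. The offset-plus-pen-state encoding `(dx, dy, pen_lifted)`, the function names, and the toy coordinates for the character 十 are assumptions chosen for illustration; this page does not specify the exact data format used by FontRNN.

```python
from typing import List, Tuple

Point = Tuple[float, float]
Stroke = List[Point]
Step = Tuple[float, float, int]  # (dx, dy, pen_lifted), an assumed encoding


def strokes_to_sequence(strokes: List[Stroke]) -> List[Step]:
    """Flatten strokes, in writing order, into relative pen movements."""
    sequence: List[Step] = []
    prev_x, prev_y = 0.0, 0.0  # assumed starting position of the pen
    for stroke in strokes:
        for i, (x, y) in enumerate(stroke):
            # Mark the last point of each stroke so stroke boundaries survive.
            pen_lifted = 1 if i == len(stroke) - 1 else 0
            sequence.append((x - prev_x, y - prev_y, pen_lifted))
            prev_x, prev_y = x, y
    return sequence


def sequence_to_strokes(sequence: List[Step]) -> List[Stroke]:
    """Invert strokes_to_sequence by accumulating the offsets."""
    strokes: List[Stroke] = []
    current: Stroke = []
    x, y = 0.0, 0.0
    for dx, dy, pen_lifted in sequence:
        x, y = x + dx, y + dy
        current.append((x, y))
        if pen_lifted:
            strokes.append(current)
            current = []
    if current:  # keep a trailing stroke that was never closed
        strokes.append(current)
    return strokes


# Toy skeleton of 十: a horizontal stroke followed by a vertical stroke.
ten = [
    [(0.0, 5.0), (10.0, 5.0)],
    [(5.0, 0.0), (5.0, 10.0)],
]
ten_sequence = strokes_to_sequence(ten)
```

Unlike a bitmap, this representation keeps stroke order explicit: swapping the two strokes of the toy glyph yields a different sequence even though the rendered image would look the same.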


Experimental Results

|

Generated Skeletons |

|

|

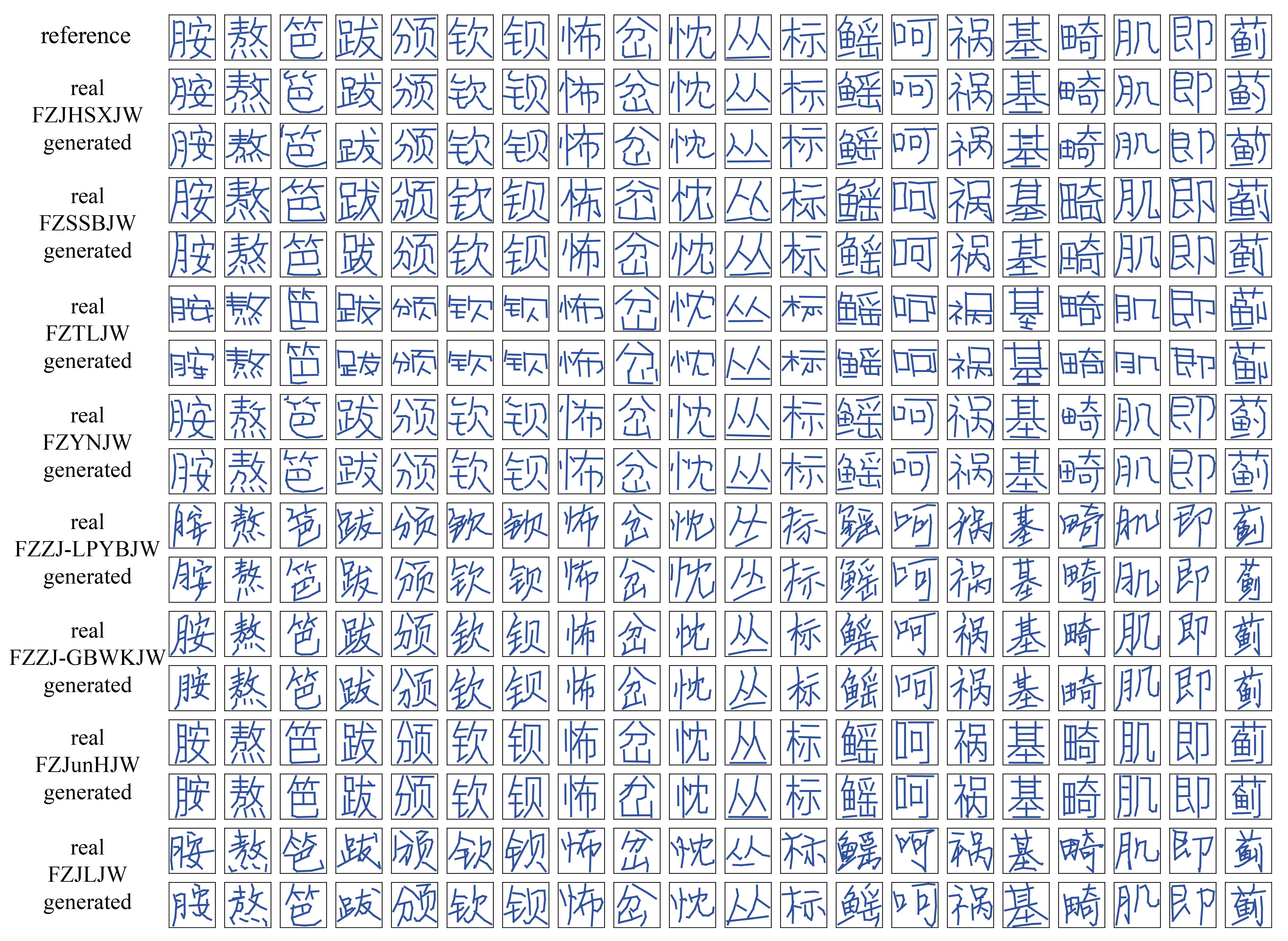

Character Synthesis |

|

|

Details at the Intersections |

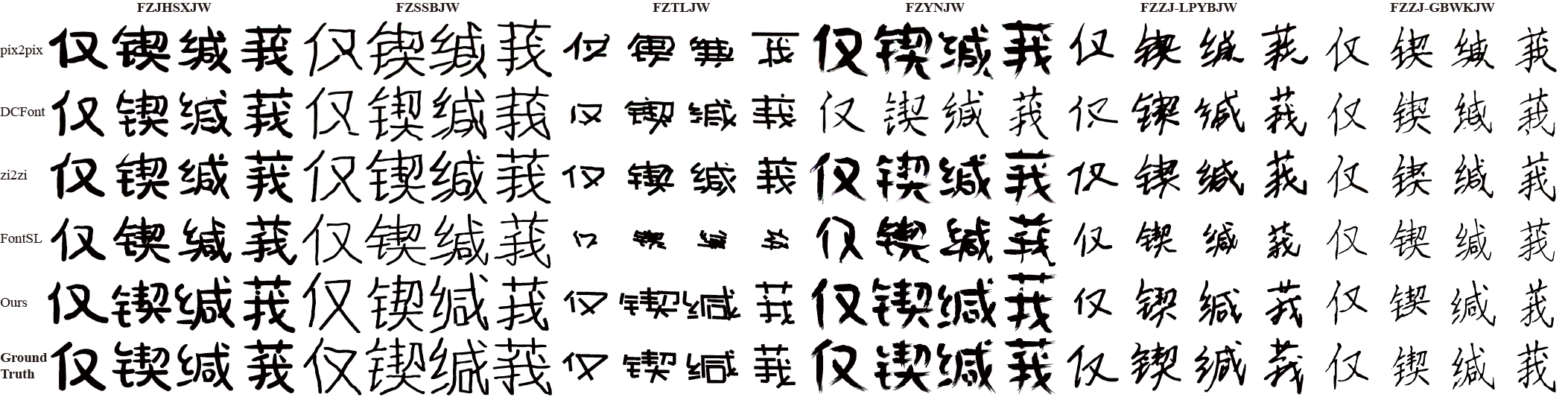

Comparsion with [ZYZ* 18] |

|

|

Citation

@article{CGF38-7:567-577:2019,
  journal = {Computer Graphics Forum},
  title = {{FontRNN: Generating Large-scale Chinese Fonts via Recurrent Neural Network}},
  author = {Shusen Tang and Zeqing Xia and Zhouhui Lian and Yingmin Tang and Jianguo Xiao},
  pages = {567-577},
  volume = {38},
  number = {7},
  year = {2019},
  note = {\URL{https://diglib.eg.org/bitstream/handle/10.1111/cgf13861/v38i7pp567-577.pdf}},
  DOI = {10.1111/cgf.13861},
}
